How USCIS Powered a Digital Transition to eProcessing with Kafka (Rob Brown & Robert Cole, US Citizenship and Immigration Services) Kafka Summit 2020

Last year, U.S. Citizenship and Immigration Services (USCIS) adopted a new strategy to accelerate our transition to a digital business model. This eProcessing strategy connects previously siloed technology systems to provide a complete digital experience that will shorten decision timelines, increase transparency, and more efficiently handle the 8 million requests for immigration benefits the agency receives each year.

To pursue this strategy effectively, we had to rethink and overhaul our IT landscape, one that has much in common with those other large enterprises in both the public and private sectors. We had to move away from antiquated ETL processes and overnight batch processing. And we needed to move away from the jumble of ESB, message queues, and spaghetti-stringed direct connections that were used for interservice communication.

Today, eProcessing is powered by real-time event streaming with Apache Kafka and Confluent Platform. We are building out our data mesh with microservices, CDC, and an event-driven architecture. This common core platform has reduced the cognitive load on development teams, who can now spend more time on delivering quality code and new features, less on DevSecOps and infrastructure activities. As teams have started to align around this platform, a culture of reusability has grown. We’ve seen a reduction in duplication of effort -- in some cases by up to 50% -- across the organization from case management to risk and fraud.

Join us at this session where we will share how we:

Used skunkworks projects early on to build internal knowledge and set the stage for the eProcessing form factory that would drive the digital transition at USCIS

Aggregated disparate systems around a common event-streaming platform that enables greater control without stifling innovation

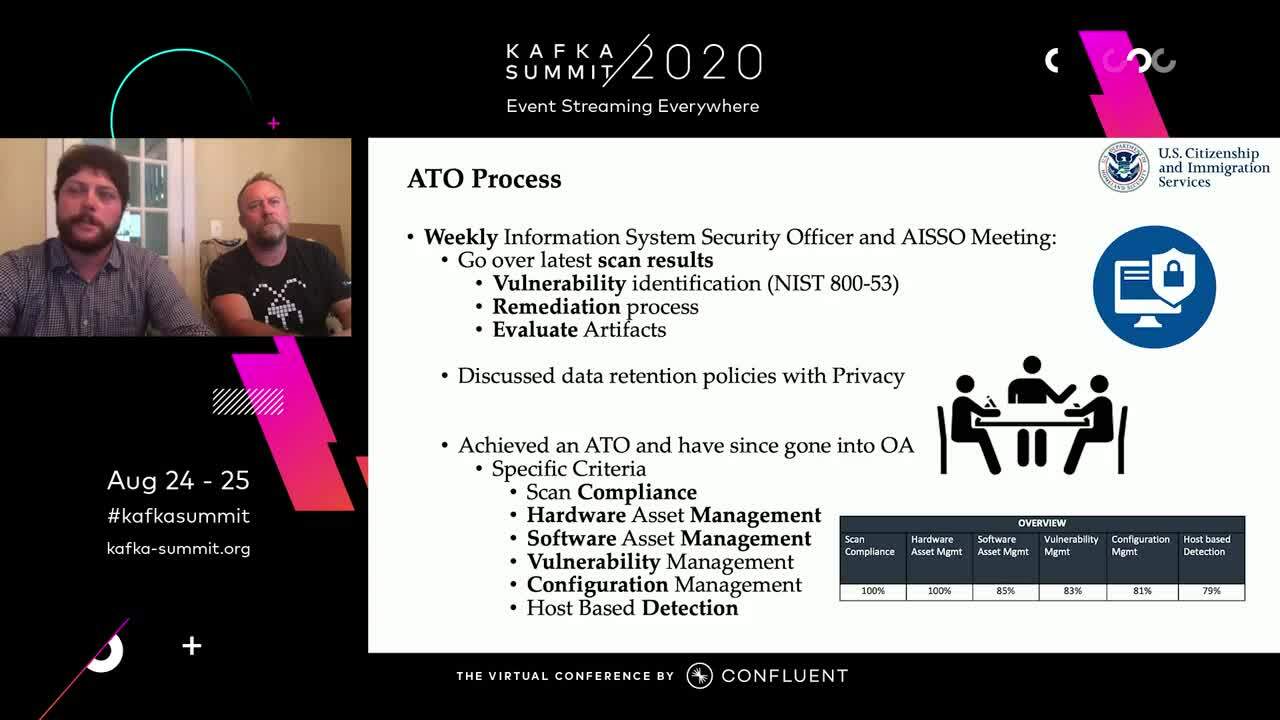

Ensured compliance with FIPS 140-2 and other security standards that we are bound by

Developed working agreements that clearly defined the type of data a topic would contain, including any personally identifiable information requiring additional measures

Simplified onboarding and restricted jumpbox access with Jenkins jobs that can be used to create topics in dev and other environments

Implemented distributed tracing across all topics to track payloads throughout our entire domain structure

Started using KSQL to build streaming apps that extract relevant data from topics among other use cases

Supported grassroots efforts to increase use of the platform and foster cross-team communities that collaborate to increase reuse and minimize duplicated effort

Established a roadmap for federation with other agencies, that includes replacing SOAP, SFTP, and other outdata data-sharing approaches with Kafka event streaming

To pursue this strategy effectively, we had to rethink and overhaul our IT landscape, one that has much in common with those other large enterprises in both the public and private sectors. We had to move away from antiquated ETL processes and overnight batch processing. And we needed to move away from the jumble of ESB, message queues, and spaghetti-stringed direct connections that were used for interservice communication.

Today, eProcessing is powered by real-time event streaming with Apache Kafka and Confluent Platform. We are building out our data mesh with microservices, CDC, and an event-driven architecture. This common core platform has reduced the cognitive load on development teams, who can now spend more time on delivering quality code and new features, less on DevSecOps and infrastructure activities. As teams have started to align around this platform, a culture of reusability has grown. We’ve seen a reduction in duplication of effort -- in some cases by up to 50% -- across the organization from case management to risk and fraud.

Join us at this session where we will share how we:

Used skunkworks projects early on to build internal knowledge and set the stage for the eProcessing form factory that would drive the digital transition at USCIS

Aggregated disparate systems around a common event-streaming platform that enables greater control without stifling innovation

Ensured compliance with FIPS 140-2 and other security standards that we are bound by

Developed working agreements that clearly defined the type of data a topic would contain, including any personally identifiable information requiring additional measures

Simplified onboarding and restricted jumpbox access with Jenkins jobs that can be used to create topics in dev and other environments

Implemented distributed tracing across all topics to track payloads throughout our entire domain structure

Started using KSQL to build streaming apps that extract relevant data from topics among other use cases

Supported grassroots efforts to increase use of the platform and foster cross-team communities that collaborate to increase reuse and minimize duplicated effort

Established a roadmap for federation with other agencies, that includes replacing SOAP, SFTP, and other outdata data-sharing approaches with Kafka event streaming